Did you ever wonder what the world would be like if a notable person had never been born? Did you ever realize the futility of that musing and wonder how it is that the work of one person could shape the whole of humanity in profound ways far beyond their intentions? Well, today we celebrate a birthday. No, not mine! And my, how I loved turning 50 this past week and the great celebration that attended the event. No, the birthday in question was 190 years earlier – April 30, 1777 to be exact.

Like me, he was intrigued with mathematics. Like me, he taught about magnetism and the universal principles associated therewith. And like me, he was fascinated by light and the manipulation of light through lenses to understand its essence. But unlike me, he thought that “the world would be nonsense, the whole creation an absurdity without immortality.”

The birthday we celebrate today belongs to none other than Johann Carl Friedrich Gauss. And the reason why I care about his birthday is singular. He gave humanity one of its most toxic forms of cognitive bondage. And we leave Gauss, together with his French collaborator (of sorts) Adrien-Marie Legendre, unconsidered at our great collective peril.

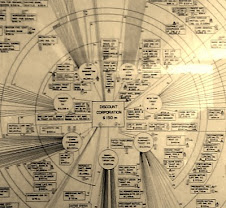

In an effort to predict astronomical movements – principally the orbits of planets – Gauss developed a body of orbital computation, including what is known as the Gaussian gravitational constant, that relies on the mathematical method of least squares. This algebraic notion – that observable phenomena can lead to forward predictions and thereby minimize measurement error – was innocent enough for its immediate application. When one is trying to figure out where a planet is going to be three days hence, this math had its utility. But, like other astronomical innovations, Gauss’ work unleashed a toxic divination impulse that has become the root of our modern scientific inquiry and the bane of humanity: the notion of prediction based on linear regression.

Now some of you hate math and, acknowledging that, let me explain something. As the abject failure of pundits and analysts has shown in the 2016 U.S. Presidential election, if you measure consensus assumptions, your conclusions are entirely wrong. In 2006 and 2007, I correctly described the conditions and the timing of the 2008 Global Financial Crisis[1]. Was I forecasting an outcome using predictive analytics? No. I was merely observing irrefutable documented behaviour in an occult industry and critiquing the system level convergence that was certain. From mass pandemics (the Asian bird flu) to resource shocks to social paroxysm (the Egyptian multi-coups), the “trained” and the “expert” are left agape when linear regression behaviour is punctuated by disequilibrium events.

Regrettably, education’s obsession with the scientific method has taught regression but has assiduously ignored its dominant fallacy – that we know the variables that matter and we recognize that which is significant. Elementary statistics teaches us that interrogatory inquiry presupposes:

1. Known variables;
2. Known scale in which these variables operate; and
3. Measurement error.

Interestingly, the same discipline teaches us the error of untested assumptions about normality, kurtosis, skewness, and orthogonality. However, the modern education system and the scientific method upon which it is built fail to account for these in every instance, diminishing the efficacy of social and technical interventions. Put another way, in the world of obsessive prediction and outcomes, we rely on our elementary algebra which seeks to solve for y in the classic linear predictive model:

y = mx + b

where y is the expected outcome; x is the variable(s) we think have an association with an observable; m is the slope, the scale or range in which x operates; and b is the intercept, which stands in here for unexplained variance (strictly, a regression carries that in a separate error term). This formula presumes that we know the association between observations and effects (an entirely fallacious assumption), that we understand the scale in which variables operate (an entirely untested assumption), and that the remainder is “unexplained”. We don’t hold the possibility that the entire ontology projected in regression may in fact be prima facie false.
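For those who would rather see this than take it on faith, here is a minimal sketch in Python. The function name `fit_line` and the five toy data points are illustrative assumptions, not anything drawn from Gauss or from a real dataset. It fits y = mx + b by ordinary least squares to points that actually follow y = x², then extrapolates with the fitted line.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = m*x + b; returns (m, b).

    Hypothetical helper for illustration only.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of cross deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    m = sxy / sxx
    b = mean_y - m * mean_x
    return m, b


# Toy data (an assumption): the true relationship is quadratic, not linear.
xs = [0, 1, 2, 3, 4]
ys = [x * x for x in xs]

m, b = fit_line(xs, ys)
residuals = [y - (m * x + b) for x, y in zip(xs, ys)]

# Extrapolate well outside the observed range.
predicted_at_10 = m * 10 + b
actual_at_10 = 10 * 10

print(m, b)                           # 4.0 -2.0
print(residuals)                      # [2.0, -1.0, -2.0, -1.0, 2.0]
print(predicted_at_10, actual_at_10)  # 38.0 100
```

The arithmetic is flawless and the residuals even sum to zero, yet the line predicts 38 where the underlying process gives 100. Nothing in the fit itself signals that the wrong form was assumed; the U-shaped pattern in the residuals only shows up if one thinks to look.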

Here’s the problem. We are not conditioned to question any of the fundamental assumptions that underpin the error of statistical divination. We want to “know” what’s going to happen. We want to “control” outcomes by manipulating variables. But what we constantly ignore is the fact that the human analog experience does not happen on 2-dimensional scatterplots through which lines can be drawn. Every struggle you’ve had, every emotional pain, every sense of loss is based largely on the fact that you projected a plane in a dimension around which you built a narrative. Often those narratives involved others. But they had their narratives, their frameworks, their projections. And just because a dot showed up in your world and a dot showed up in mine doesn’t mean that the lines that you connect and the lines I connect go in the same direction. In fact, it’s certain that they do not. So one day, using the exact “same” data, we arrive at different conclusions. And then we expend amazing energy trying to re-narrate what we didn’t understand in the first place.

Gauss gave us a 2-D god-complex in a multi-dimensional world. And as long as we subscribe to either of those features (the error of 2-D or the god-complex) we ensure nothing but pain for our existence. Our obsession with the “future” is nonsensical. Making up stories and myths about immortality – a prerequisite for Gauss – implies that the present is insufficient. And with that measurement error, all other measurements (and the measurers) will be disappointments.


This resonates with some thoughts that Nassim Taleb’s writings seem to point towards. Very interesting.

Thanks again Dave

You expressed it very well. It’s also my observation that we humans have a problem just observing and feeling. We seem to have an inexplicable need to break whole phenomena into pieces as tiny as possible and try to force-pack everything into predictable packages. This robs us of entire dimensions of understanding.

I got a laugh when I saw where it was going. Thanks for expanding my mind and making me smile!!

Hello Dave, when are u2 coming to speak in Australia, Queensland, we await your arrival with excitement and butterflies 🦋
